Edge AI Era

Together with AAEON, an Asus group brand, ICC is committed to cutting-edge computing technology and to developing new solutions for customers who are constantly innovating across a variety of fields. With AI technology having an increasing impact on the way people work, we are developing the hardware to support today's and tomorrow's AI systems. Because AI computing requires an enormous amount of processing power, traditional AI systems work by sending data to the cloud to be processed; a decision on what the system should do is then sent back to the connected devices. However, there are several problems with this approach. First, because of limited network coverage and battery capacity on mobile devices, it is not always possible to ensure that devices can connect to the cloud. Second, sending large amounts of data to the cloud raises potential security issues. Finally, although a server may take only milliseconds to process the data, even such a short delay can be disastrous for some applications, such as robotics and driver assistance systems. With Edge AI, devices process data locally and make decisions in real time. In addition to being faster and more secure, Edge AI can also help reduce power consumption.

What is Edge Computing and why is it important?

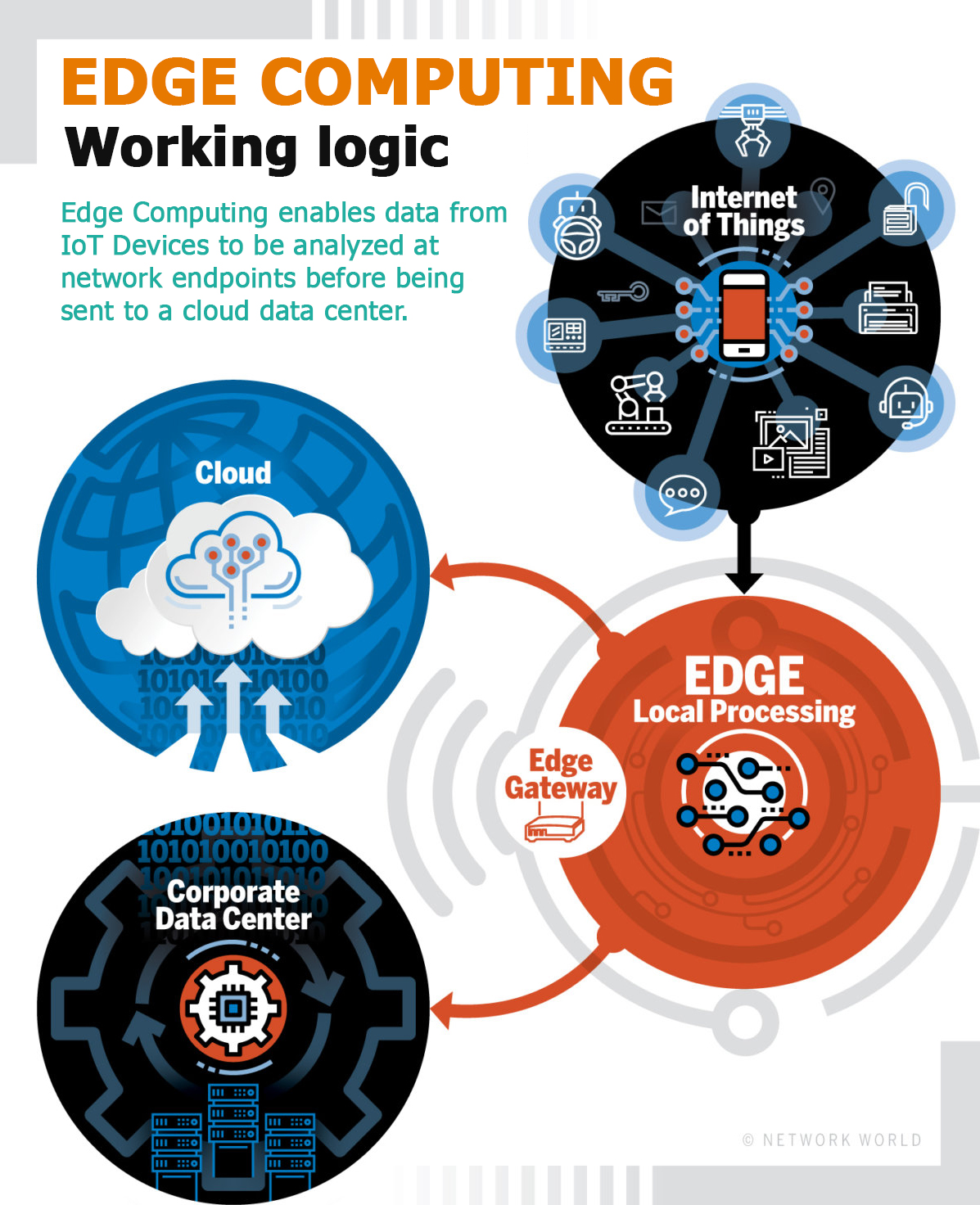

With the deployment of IoT devices and the arrival of fast 5G wireless networks, Edge Computing systems offer a way to place computing and analytics close to where the data is created. Edge computing transforms the way data is handled, processed, and delivered from millions of devices around the world. The rapid growth of Internet-connected (IoT) devices continues to drive Edge Computing systems, as do new applications that require real-time computing power. Faster network technologies such as 5G wireless allow Edge Computing systems to accelerate the creation or support of real-time applications such as video processing and analytics, self-driving cars, artificial intelligence, and robotics. While the initial goal of edge computing was to address the bandwidth cost of moving IoT-generated data over long distances, the rise of real-time applications that need processing at the edge will push the technology forward.

What is Edge Computing?

The concept of Edge Computing is defined as "part of a distributed computing topology where computing is located close to the edge, where things and people produce or consume this information."

At a basic level, Edge Computing brings computing and data storage closer to the devices where the data is collected, rather than relying on a central location that may be thousands of miles away. This is done so that data, especially real-time data, does not suffer from latency issues that can affect an application's performance. In addition, companies can save money by processing data locally, which reduces the amount of data that needs to be processed centrally or in a cloud-based location.
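To make the latency argument concrete, the following minimal sketch estimates round-trip propagation delay from distance alone. It assumes a signal speed of roughly 200,000 km/s in optical fiber (about two thirds of the speed of light in a vacuum) and ignores routing, queuing, and processing time, so real-world latency will be higher. The distances and location labels are illustrative assumptions, not figures from this article.

```python
# Assumed signal speed in optical fiber, roughly 2/3 of c in a vacuum.
FIBER_SPEED_KM_PER_S = 200_000.0


def round_trip_propagation_ms(distance_km: float) -> float:
    """Return the round-trip propagation delay in milliseconds.

    Only the physical travel time is counted; routing, queuing and
    server processing time are deliberately left out.
    """
    one_way_seconds = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_seconds * 1000


# Illustrative (assumed) distances between a device and its compute resource.
locations = [
    ("edge gateway on site", 0.1),
    ("regional data center", 300.0),
    ("distant cloud region", 5000.0),
]

for label, km in locations:
    delay = round_trip_propagation_ms(km)
    print(f"{label:<22} {km:>8.1f} km  ->  {delay:.3f} ms round trip")
```

Even before any processing happens, distance sets a lower bound on response time, which is why moving computation closer to the device matters for real-time workloads.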

Edge Computing was developed due to the exponential growth of IoT devices that connect to the internet to receive information from the cloud or to transmit data back to the cloud. Many IoT devices generate enormous amounts of data during their operations.

Consider devices that monitor production equipment on a factory floor, or an internet-connected video camera that sends live footage from a remote office. A single device can easily transmit its data over a network, but problems arise when the number of devices transmitting at the same time grows. Instead of one video camera transmitting live footage, picture hundreds or thousands of devices doing so. Not only does quality suffer because of latency, but the bandwidth costs can also be enormous.
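The scaling problem can be sketched with simple arithmetic. The example below assumes every camera streams continuously at 4 Mbit/s; that bitrate is a hypothetical figure chosen for illustration, not a measurement, and the function names are likewise invented for this sketch.

```python
# Hypothetical per-camera video bitrate, chosen only for illustration.
PER_CAMERA_MBPS = 4.0


def aggregate_bandwidth_mbps(device_count: int, per_device_mbps: float) -> float:
    """Uplink bandwidth needed if every device streams at the same time."""
    return device_count * per_device_mbps


def monthly_transfer_tb(total_mbps: float, days: int = 30) -> float:
    """Data volume of continuous streaming, in decimal terabytes."""
    seconds = days * 24 * 60 * 60
    megabits = total_mbps * seconds
    megabytes = megabits / 8
    return megabytes / 1_000_000  # 1 TB = 1,000,000 MB


for cameras in (1, 100, 1000):
    total = aggregate_bandwidth_mbps(cameras, PER_CAMERA_MBPS)
    volume = monthly_transfer_tb(total)
    print(f"{cameras:>5} cameras: {total:>7.1f} Mbit/s, about {volume:.1f} TB per 30 days")
```

Under these assumptions the required bandwidth grows linearly with the number of devices, so a load that is trivial for one camera becomes a significant cost at fleet scale.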

Edge Computing hardware and services help resolve this issue by acting as a local processing and storage resource for many of these systems. For example, an edge gateway can process data from an edge device and then reduce bandwidth requirements by sending only the relevant data back to the cloud. Alternatively, it can send data back to the edge device when a real-time application needs it.
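As a rough illustration of the gateway behaviour described above, the sketch below filters sensor readings locally and forwards only out-of-range values plus a compact summary. The `Reading` type, the threshold values, and the function names are all hypothetical; a real gateway would use whatever relevance rule and transport its deployment requires.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Reading:
    """One sample from a (hypothetical) edge sensor."""
    sensor_id: str
    value: float


def select_for_upload(readings, low, high):
    """Keep only readings outside the normal range [low, high]."""
    return [r for r in readings if not (low <= r.value <= high)]


def summarize(readings):
    """Build a compact summary to send instead of every raw reading."""
    values = [r.value for r in readings]
    if not values:
        return {"count": 0}
    return {
        "count": len(values),
        "mean": mean(values),
        "min": min(values),
        "max": max(values),
    }


# Simulated temperature samples; only one falls outside the assumed range.
readings = [Reading("temp-1", v) for v in (21.0, 21.4, 20.9, 35.2, 21.1)]

to_cloud = select_for_upload(readings, low=15.0, high=30.0)
summary = summarize(readings)

print(f"raw readings: {len(readings)}, forwarded to cloud: {len(to_cloud)}")
print(f"summary: {summary}")
```

The design choice here is to send anomalies in full and everything else in aggregate, which is one simple way to cut upstream traffic while keeping the data that matters.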

These edge devices can include many different things, such as an IoT sensor, an employee's laptop, the latest smartphone, a security camera, and even an internet-connected microwave in the office lounge. Edge gateways themselves are considered edge devices within an Edge Computing infrastructure.

Why is Edge Computing important?

For many companies, cost savings alone can be the driving force behind building an Edge Computing architecture. Companies that adopted the cloud for many of their applications may have discovered that bandwidth costs are higher than expected. Nevertheless, the biggest benefit of Edge Computing is the ability to process and store data faster, enabling the more efficient real-time applications that are critical for companies. Before Edge Computing, a smartphone scanning a person's face for facial recognition would need to run the recognition algorithm through a cloud-based service, which takes a lot of time. With the Edge Computing model, the algorithm can run locally on an edge server or gateway, or, given the increasing power of smartphones, even on the smartphone itself. Applications such as virtual and augmented reality, self-driving cars, smart cities, and even building automation systems require fast processing and response.

Companies such as NVIDIA have recognized the need for more processing at the edge, which is why we are seeing new system modules with built-in AI functionality. For example, the company's newest Jetson Xavier NX module is smaller than a credit card and can be built into small devices such as drones, robots, and medical devices. AI algorithms require a large amount of processing power, which is why most of them run through cloud services. The growth of AI chipsets that can handle processing at the edge will provide better real-time responses in applications that require instant computing.

Privacy and security

However, as with many new technologies, solving one problem can create others. From a security point of view, data at the edge can be troublesome, especially when it is handled by different devices that may not be as secure as a centralized or cloud-based system. As the number of IoT devices increases, it is imperative that IT understands the potential security issues surrounding these devices and ensures that these systems can be secured. This includes encrypting data and making sure that the right access control methods, and even VPN tunneling, are used. In addition, differing device requirements for processing power, electrical power, and network connectivity can affect the reliability of an edge device. This makes redundancy and failover management crucial for devices that process data at the edge, so that data is still delivered and processed correctly when a single node goes down.
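The failover idea can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production pattern: `primary_node` and `backup_node` are hypothetical stand-ins for real edge nodes, and only connection and timeout errors are treated as reasons to fail over.

```python
from typing import Callable, Sequence


class AllNodesFailed(Exception):
    """Raised when no node in the list could process the payload."""


def process_with_failover(payload: str, nodes: Sequence[Callable[[str], str]]) -> str:
    """Try each node in order and return the first successful result."""
    errors = []
    for node in nodes:
        try:
            return node(payload)
        except (ConnectionError, TimeoutError) as exc:
            # Record the failure and move on to the next node in the list.
            errors.append(exc)
    raise AllNodesFailed(f"{len(errors)} node(s) failed: {errors}")


def primary_node(payload: str) -> str:
    # Hypothetical primary node that is currently unreachable.
    raise ConnectionError("primary unreachable")


def backup_node(payload: str) -> str:
    # Hypothetical standby node that can do the same work.
    return f"backup processed {payload}"


result = process_with_failover("reading-42", [primary_node, backup_node])
print(result)
```

Ordering the node list by preference keeps the policy simple: the first healthy node wins, and the caller only sees an error when every node has failed.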

NEW AGENDA 5G...

Around the world, carriers are deploying 5G wireless technologies, which promise the benefits of high bandwidth and low latency for applications, enabling companies to go from a garden hose to a fire hose with their data bandwidth. Instead of just offering faster speeds and telling companies to continue processing data in the cloud, many carriers are building Edge Computing strategies into their 5G deployments in order to offer faster real-time processing, especially for mobile devices and for connected and autonomous vehicles. While the initial goal of Edge Computing was to reduce the bandwidth cost of moving IoT data over long distances, it is clear that the growth of real-time applications requiring local processing and storage capabilities will drive the technology forward in the coming years.